5 Practical Lessons from the Human-Centered AI in Higher Ed Series

Catch up on Packback’s 5-part series on Human-Centered AI. See why higher ed is shifting away from AI policing and focusing on making student thinking visible.

Trying to ban and police students’ use of generative AI isn’t working. It’s a losing game that drains faculty time, creates massive governance headaches for provosts, and fractures the fundamental trust between instructors and students.

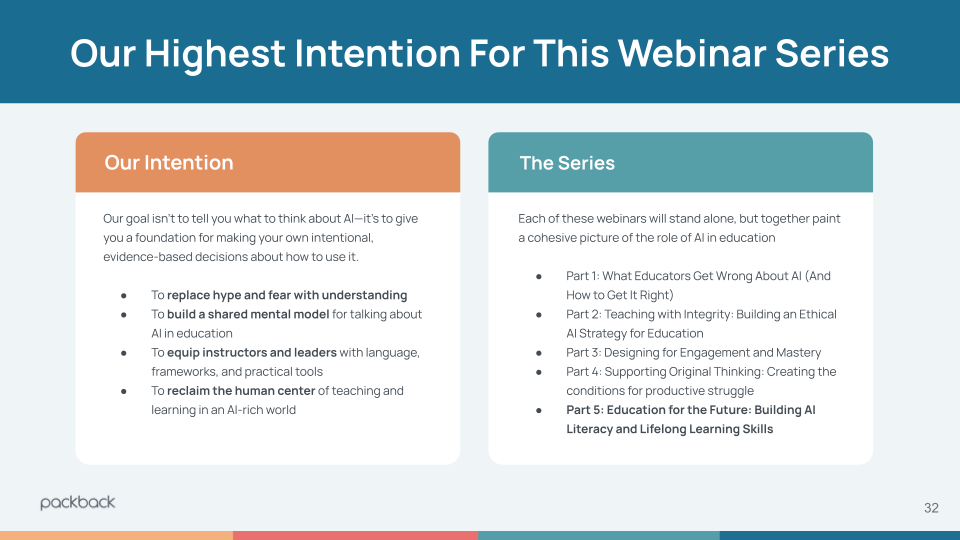

To address this, Packback hosted a 5-part interactive webinar series bringing together instructional designers, administrators, faculty, and AI experts to figure out what actually works. We call it the Human-Centered AI in Higher Education series. Across five monthly sessions totaling five hours, thousands of educators from across the country joined us to share their real-world classroom experiences.

The resounding consensus? We have to stop policing the final output and start evaluating the learning process. We must make the thinking process visible.

If you didn’t have a chance to attend the live sessions, here is a deep dive into the biggest lessons learned, backed by the data and voices of the educators who attended, along with how your institution can build a culture of visible, authentic learning.

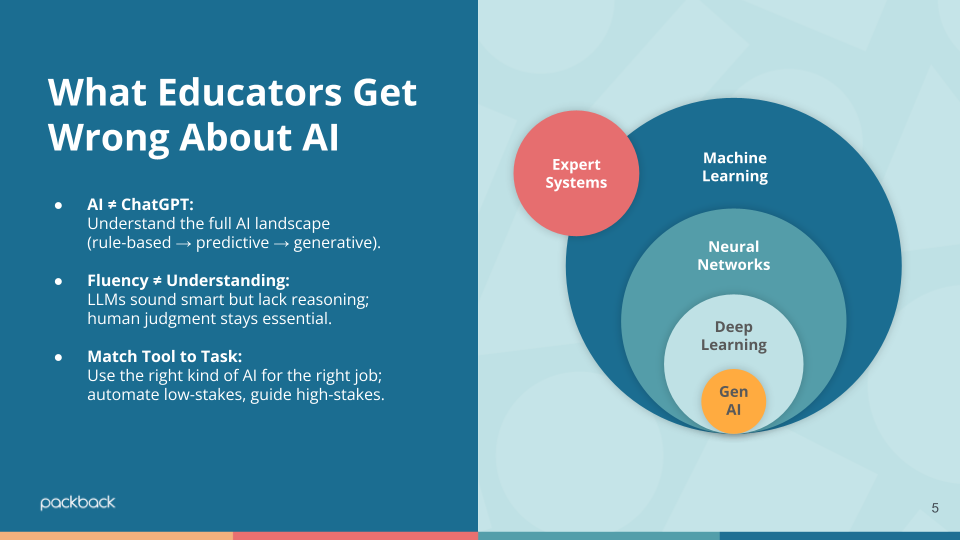

Lesson 1: Not All AI is Created Equal

From Part 1: What Educators Get Wrong About AI (And How to Get it Right)

In our kickoff session, we reframed the AI conversation from a search for “truth” to a search for explainability and AI literacy.

When we treat AI as a “black box” that either catches or facilitates cheating, we miss the actual opportunity of teaching students how these models function so they can use them responsibly. AKA: AI literacy.

AI literacy starts with understanding the authorship process.

During the session, an attendee, Professor F. Cesa, shared a sobering epiphany she had after adapting her essay assignments. She requires students to use a Google Doc to show their notes and outlines, telling them she expects to see the process and at least one round of editing.

“By doing this,” Fabiana shared, “I discovered that most students do not have a process and do not rewrite or edit.”

This is an “Aha!” moment for AI literacy. The tension we feel around generative AI is a process gap. When students lack a reliable method for drafting, writing, and refining ideas, they panic and turn to AI to fill the void. By focusing on Instructional AI with tools that coach the student through the “messy middle” of writing, we move the needle from passive consumption to active engagement. When we make the process a required, visible part of the grade, the urge to bypass the work diminishes.

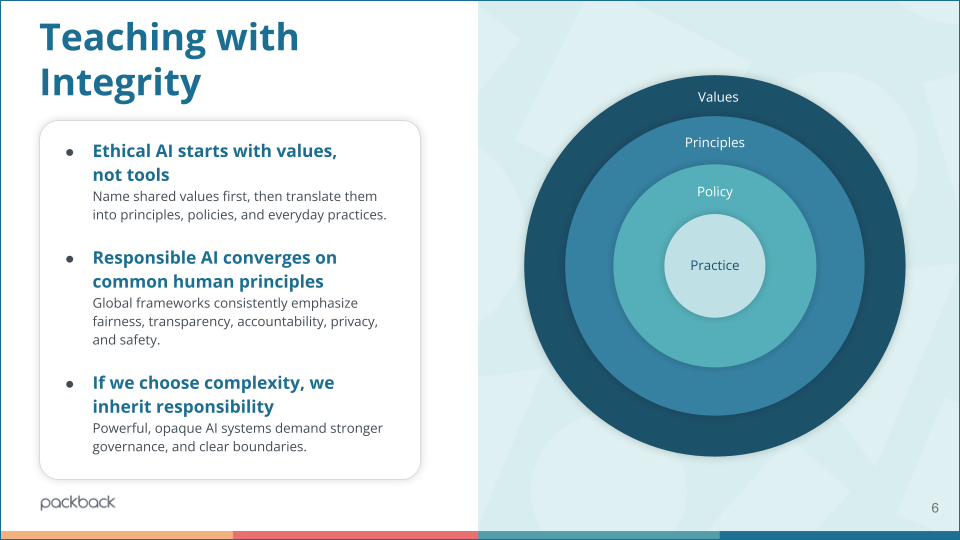

Lesson 2: Policy Isn’t Enough; We Need a Framework

From Part 2: Teaching with Integrity in the Age of AI

Many administrators hope a strong institutional policy will serve as a safety net. But our live audience data suggested otherwise.

When we polled the audience during Session 2, 55% of attendees said they felt “Uncomfortable” or “Somewhat Uncomfortable” relying on their institution’s AI policy to navigate a difficult situation with a student.

Why the lack of confidence? Because a policy is a rule, but teaching requires a framework. Dr. Rufus Glasper, President and CEO of the League for Innovation in the Community College, joined us to co-host the discussion on how to bridge this gap.

The data from our second poll revealed a massive opportunity for faculty enablement: 34% of attendees said they are already embedding AI into assignments, but a staggering 28% said they want to teach AI literacy skills but don’t feel equipped or supported yet. Institutions cannot mandate their way out of this; they have to provide scalable professional development to help faculty redesign their rubrics.

Lesson 3: Designing for Productive Friction

From Part 3: Designing for Engagement in the Age of AI

AI is incredible at making things easy. It can generate an essay, summarize a reading, or write a discussion post in seconds. But in education, “easy” isn’t the goal. Effortless completion bypasses the cognitive effort that learning requires.

In Session 3, we dug into course architecture. Packback’s Director of Product, Oliver Short, introduced the concept of “productive friction.” The goal is to design for engagement by requiring students to show their work through touchpoints that AI can’t fake.

When we asked the audience where they are currently seeing the strongest student engagement, the answers were telling: 33% said applied or real-world tasks, and 30% said discussions or debates. Very few said standard essays.

This requires building what we call the “Human-in-the-Loop” into course design: touchpoints where meaningful peer-to-peer interactions, live defenses, and self-reflection happen.

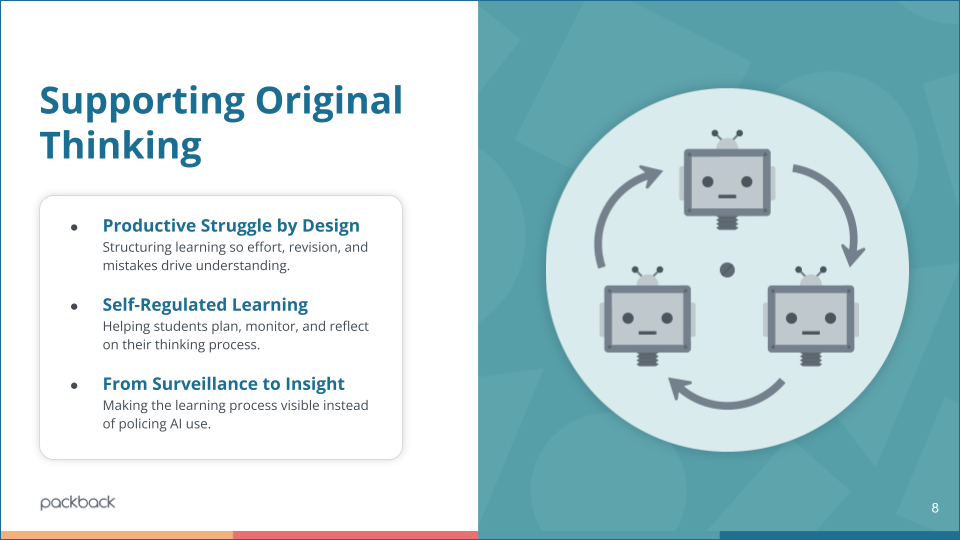

Lesson 4: Stop Grading the Final Draft; Grade the “Messy Middle”

From Part 4: Supporting Original Thinking: Making AI a Partner in Learning

Original thinking doesn’t emerge fully formed in a polished final draft. It happens in the “messy middle” with first drafts, rough outlines, peer feedback, and revisions.

Dr. Cynthia Wilson, Chief Impact Officer at the League for Innovation in the Community College, joined us to co-host the discussion and provide guidance for a better path forward.

To ground this discussion, we shared data from a recent Packback survey of 691 students. The data showed that students are hiding their process because they fear being falsely accused of cheating if they use AI simply to brainstorm or outline.

We asked our faculty audience: “In your class, how visible are the ‘messy middle’ stages of a project?”

- 35% said it was “Private but Active” (only discussed 1-on-1).

- 23% said it was completely “Hidden” (students only show polished work to avoid losing points).

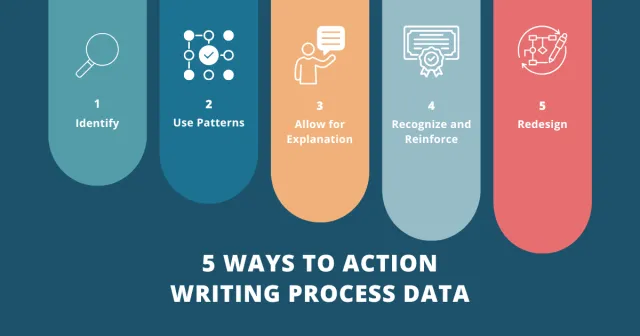

If we want to understand how students are engaging with writing, we have to start evaluating the messy middle. If faculty can see the authorship timeline with the notes, the edits, and the collaboration, the final product becomes almost irrelevant to the integrity conversation. The process is the proof of learning.

Attendee Tami J. summed up the energy in the chat perfectly: “Love the HUMAN LOOP!!! We need to create more touchpoints and a learning environment where our students do not feel intimidated by reaching out to their instructors.”

Lesson 5: Turning Discussion Into Action

From Part 5: Preparing Education for the Age of AI

In our final live panel, we brought everything together to ask: What next? Dr. Heidi McLaughlin, Associate Professor at CSU Bakersfield, hit on the most critical adjustment institutions need to make moving forward:

“You can’t use a 2019 rubric for a 2026 assignment. We have to adjust our rubrics to actually reward the process of revision and critical thinking, rather than just grading a polished PDF.”

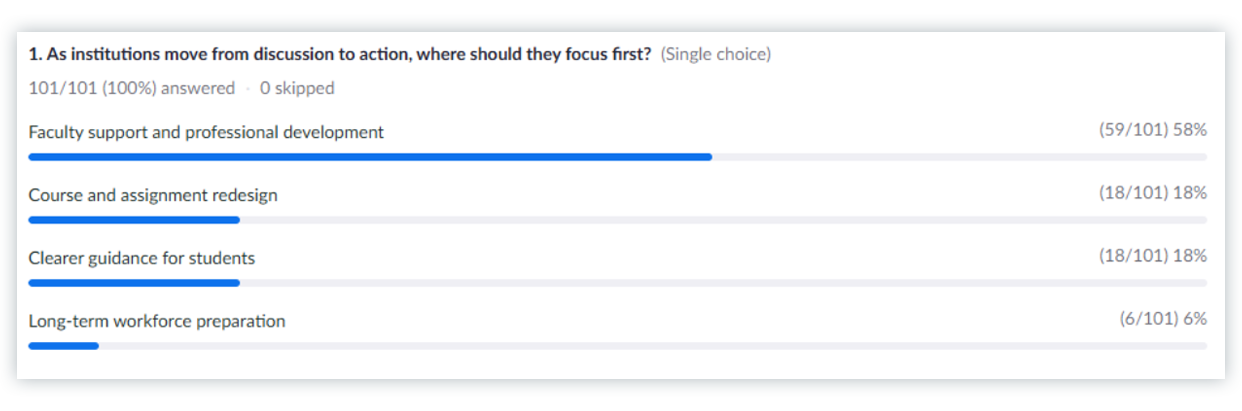

But faculty cannot do this alone. The path forward requires institutional investment. When we polled the audience on where institutions should focus their energy first as they move from discussion to action, a massive 58% said Faculty Support and Professional Development. (Course redesign came in a distant second at 18%.)

Institutions must invest in tools and training that allow faculty to easily see the student’s learning journey without adding dozens of hours to their grading workload. That could mean using tools like Packback’s upcoming Engagement Insights to see a visual timeline of a student’s edits, or experimenting in environments like Packback Labs, where students actually reverse-tutor a naive AI to prove their mastery.

What’s Next? Get Certified.

We covered a lot of ground in this series, from rethinking syllabus statements to entirely redesigning course rubrics. To make this as easy to implement as possible, later this month we are packaging all 5 sessions, the slide decks, and our best assignment templates and institution guides into a comprehensive, self-paced course (complete with a LinkedIn badge!). Submit the form below and we’ll shoot you an email when the certification course opens up in late April 2026.