Webinar Recap – Preparing Higher Education for the Age of AI

Discover how higher ed leaders are ditching AI detectors and instead evaluating the “messy middle” of the writing process to build critical thinking and prepare students for the future.

Over the course of our Human-Centered AI in Higher Education webinar series, we’ve covered the theory, the ethics, and the pedagogy of this new frontier.

But theory only gets you so far. What does it actually look like to put a human-centered AI strategy into practice on your campus?

For the finale of our 5-part series, we brought together Dr. Heidi McLaughlin (CSU Bakersfield) and Kristy Duggan (WSU Tech), alongside Packback’s Craig Booth (CTO) and Barbara Kenny (Principal Product Manager, R&D). Their consensus was clear: the future of AI in education depends on building better windows into how students think.

Faculty Support is the Missing Link

Administrators cannot simply hand down a new AI policy, cross their fingers, and expect magic.

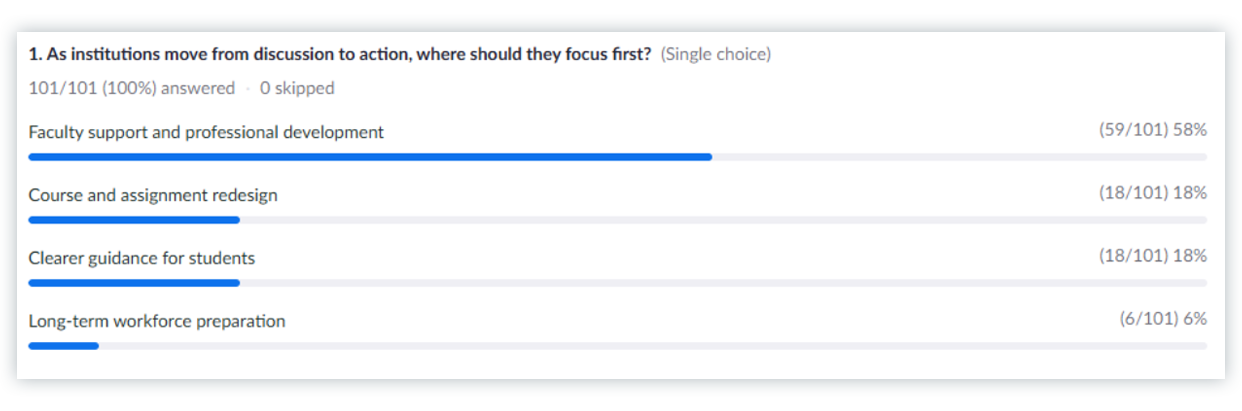

When we polled our live audience of educators and academic leaders, we asked: “As institutions move from discussion to action, where should they focus first?” The answer was clear: 58% said Faculty Support and Professional Development, while only 18% pointed to Course Redesign.

The Takeaway: If you want faculty buy-in, you have to equip faculty for the transition. Mandates without support trigger governance blowback. Faculty need the time, the training, and the right AI tools for higher education to learn how to design assignments that can’t easily be outsourced to ChatGPT. Crucially, these tools need to save faculty time and make writing and discussion assignments work at scale, without making it feel as though the institution is dictating how they teach.

You Can’t Use a 2019 Rubric for a 2026 Assignment

For the last three years, reactions to generative AI have been all over the place. They have ranged from concerns about policing and detection, to questions about how students are actually completing assignments, to proposals to ban it altogether, to worries about cognitive offloading.

Dr. Heidi McLaughlin shared a critical insight:

If you are still grading just the final, polished PDF, you are inviting AI shortcuts.

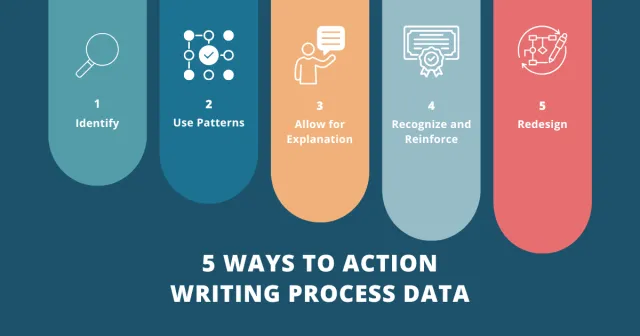

The strategic shift? Lead with the authorship process.

Rubrics must be adjusted to assess the student’s process, their edits, and their ability to critique AI-generated content.

We have to grade the “messy middle”

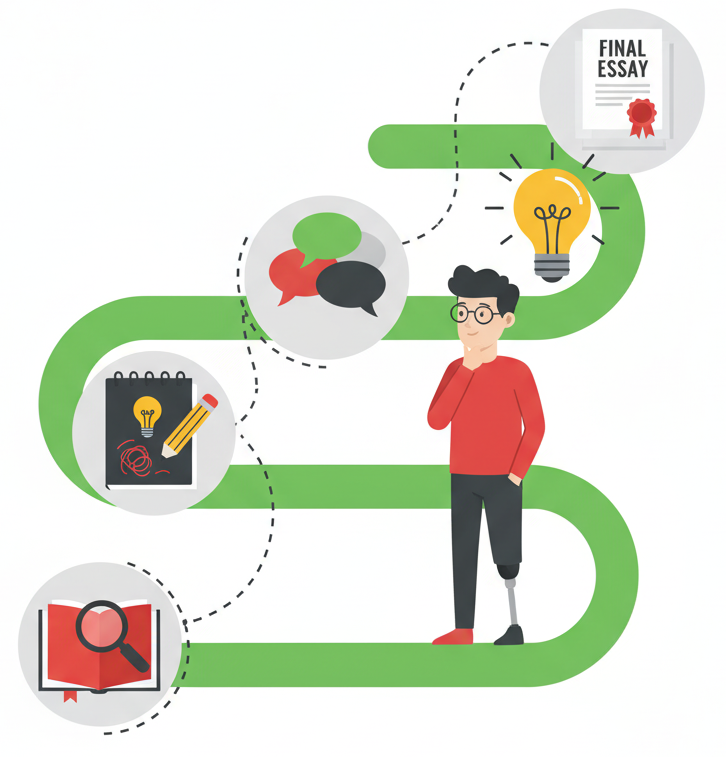

By instrumenting the writing process through outlines, drafts, revision timelines, citations, and feedback actions, faculty can evaluate authentic effort and learning progression. This approach protects the institution from the governance risk of false accusations, and it keeps students from feeling subjected to algorithmic surveillance.

Building the 4 C’s (And How to Test Them)

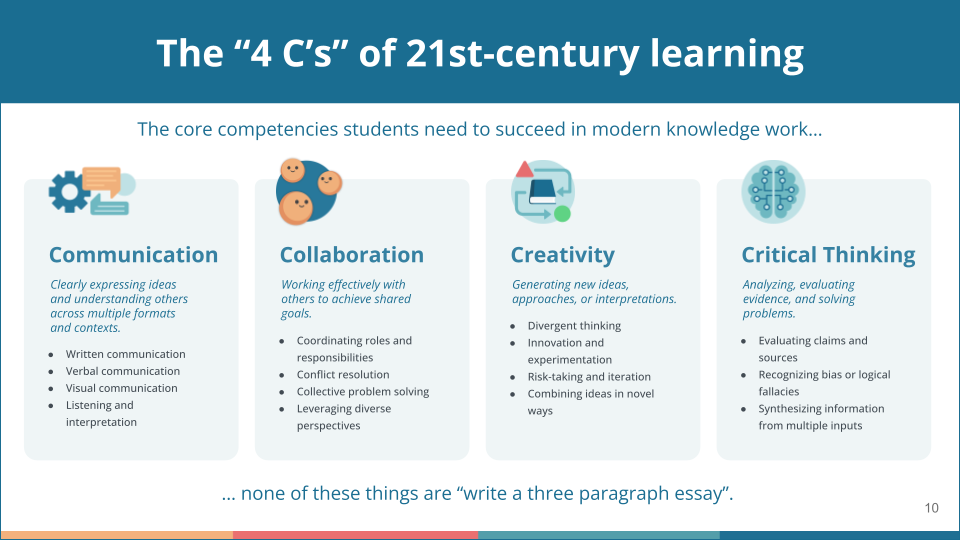

Ultimately, our goal is student success and retention. To achieve that goal, we need to focus on the 4 C’s framework: Critical Thinking, Collaboration, Creativity, and Communication.

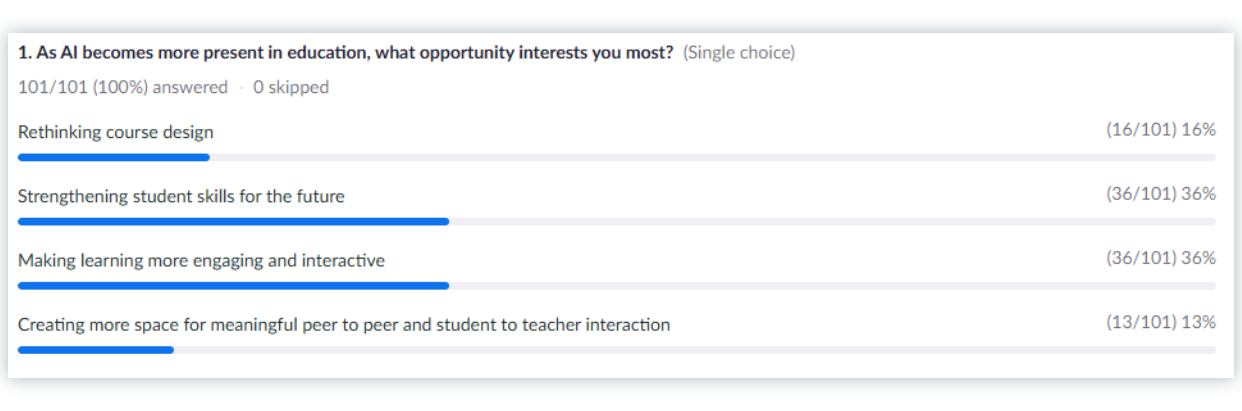

When we asked our audience what AI opportunity excited them the most, 36% said strengthening these student skills for the future. But how do we actually do this? How do we assess critical thinking in an age where AI can write a B+ essay in four seconds?

During the panel, we showcased Packback Labs, which offers entirely new, transparent ways for students to interact with AI:

- Teach the AI: In this reverse-tutoring simulation, students guide a naive AI through a topic and correct its misconceptions along the way. Students are then assessed strictly on that dialogue.

- AI Co-Authored Essay: Students build their drafts with AI in stages. Instructors get a simplified “show your work” view: a visual timeline of student effort that highlights exactly where students accepted the AI’s help and where they stepped in to make changes.

By shifting the focus from the final product to the process of co-creation, we align the needs of the Provost, the CIO, the Faculty, and the Student.

No data silos, no false accusations, no busywork. Just authentic, visible learning.