The AI Trust Gap in Higher Ed: Why Student AI Use Is More Complicated Than It Seems

Higher education has spent the last two years asking a version of the same question: How much are students using generative AI to cheat?

The answer is more complicated than the question suggests. Some students use AI in ways that may raise concerns, while others use it for support. That ambiguity, coupled with high-stakes concerns about cheating, leaves students and faculty struggling with suspicion and uncertainty. The result is a gap in trust.

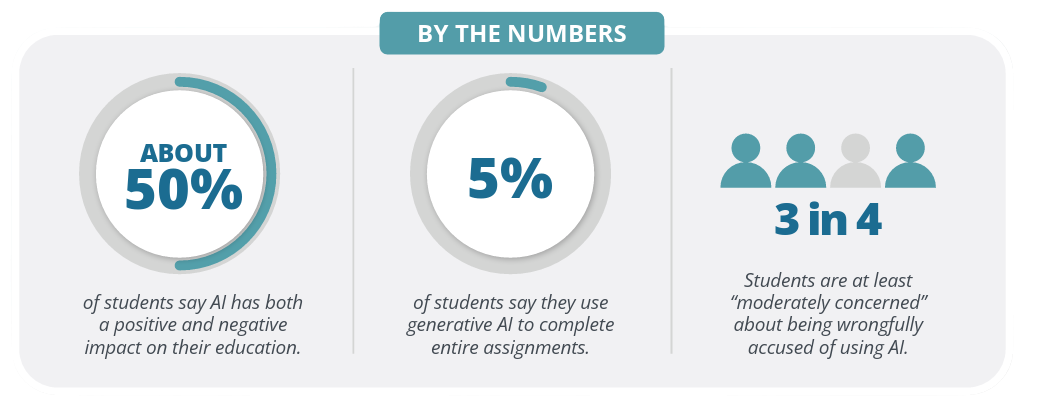

Packback’s new ebook, The AI Trust Gap in Higher Ed: What 691 Students Reveal About Generative AI, Academic Integrity, and Learning, takes a more nuanced look at that gap. Drawing on a January 2026 survey of 691 students, it examines how students say they use AI, how they think their peers use it, and what the difference means for teaching, learning, and trust.

AI Misuse Is Only Part of the Story

Academic integrity concerns are real, and institutions should take them seriously. But when every conversation about AI starts and ends with misconduct, higher education risks missing the larger issue: students are navigating AI in an environment where expectations are often unclear.

Some students are using AI in ways that may raise concerns, but the vast majority say they use it for support, such as getting unstuck, clarifying concepts, or organizing ideas. The challenge for institutions is learning to distinguish AI use that supports learning from AI use that replaces it.

This ebook centers on that distinction and the data supporting it.

The Real Issue Is Trust

The data collected in our survey of 691 students points to a growing mismatch between student behavior, student perception, and institutional response.

More often than not, students in this survey report that they rarely, if ever, use AI to offload the thinking process entirely, and say instead that they use it as a partner. At the same time, students often believe their peers use AI more aggressively than they do. That gap between what students report about themselves and what they assume about others can shape classroom culture as much as actual behavior does.

When students think misuse is widespread, fairness feels uncertain. When faculty feel unsure about student authorship, trust erodes. When policies are vague or enforcement feels punitive, confusion grows.

That is the AI trust gap.

Why Higher Ed Needs a Better AI Strategy

Detection and enforcement have a role to play, but they cannot be the whole strategy.

Higher education needs clearer expectations, stronger pedagogical guidance, and more visibility into the learning process. Students need to know where acceptable support ends and substitution begins. Faculty need tools and policies that protect rigor without turning every assignment into an investigation.

The path forward is not blanket adoption or blanket restriction, but a more intentional approach to AI that keeps learning, critical thinking, and faculty judgment at the center.

Download the Ebook

The AI Trust Gap in Higher Ed takes a deeper look at what 691 students reveal about generative AI, academic integrity, peer perception, and learning.

Inside, you will find student survey data, key perception gaps, and practical implications for higher ed leaders working to build responsible AI strategies.

Request your copy of The AI Trust Gap in Higher Ed to explore the full findings.