Webinar Recap: What Student Engagement Looks Like in the Age of AI

Stop playing AI cop. See the 5 key takeaways from our webinar on how making student thinking visible can drive a 17% increase in the odds of retention in the age of AI.

This blog recaps our webinar, “What Student Engagement Looks Like in the Age of AI.” Watch it in its entirety HERE.

The landscape of higher education is currently defined by a high-scrutiny budget environment and the rapid evolution of generative AI. The primary concern is no longer just “cheating.” The real risk is campus controversy, faculty burnout, and the potential for false accusations that trigger governance blowback.

We explored these challenges in our latest session, What Student Engagement Looks Like in the Age of AI, co-hosted with Dr. Cynthia Wilson from the League for Innovation in the Community College. The discussion centered on how institutions can align student success with academic integrity by paying attention to the authorship process.

Here are five takeaways from our recent session on how to anchor engagement in an era of automation.

1. How does AI impact student engagement and retention?

We often treat engagement as a “nice-to-have” metric, but the data suggests it is a survival requirement for institutions. According to research by Kuh et al., a one-standard-deviation increase in student engagement raises the odds of second-year retention by 17%.

When students are actively involved in developing their own ideas, they are more likely to persist and graduate. In an era where AI can generate a final product in seconds, cultivating this deep engagement is essential for maintaining enrollment and proving ROI on department purchases.

When students feel their unique process is valued, they stay.

2. What is the “Learning Iceberg” in student writing?

Oliver Short, Packback’s Senior Director of Product, points out that we often see only the 10% of learning that sits above the surface: the final essay, or “output.”

The other 90% is the “messy middle” where students organize thoughts, develop a point of view, and navigate productive struggle. If instructors only evaluate the final document, they lose the evidence of human effort.

“Engagement is an outcome, it’s not an output.

It happens through organization of thoughts, development of a point of view, and through productive struggle.”

– Oliver Short

By visualizing the writing process, including outlines, drafts, and revision timelines, instructors can see the thinking that AI usually obscures.

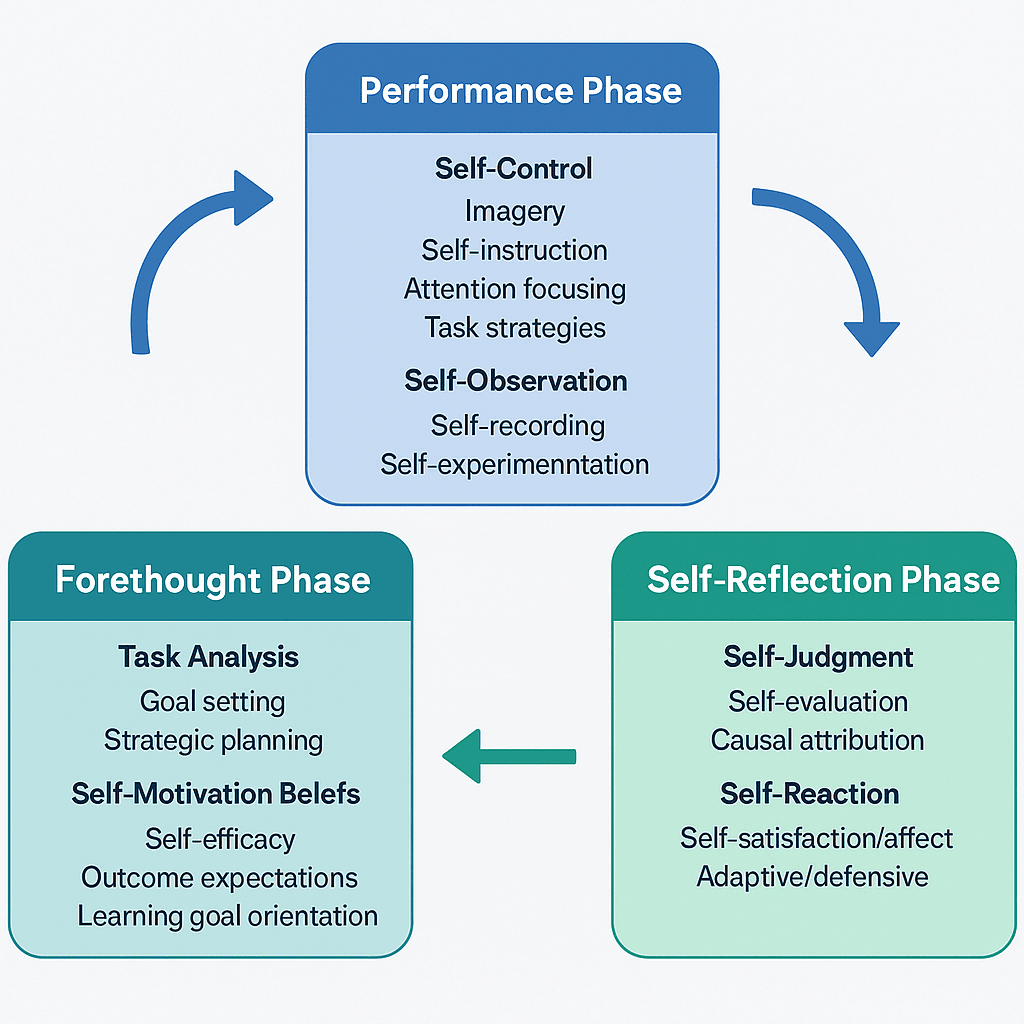

3. What is Zimmerman’s Model of Self-Regulated Learning in writing?

While AI tools change weekly, human cognition does not. Packback anchors its technology in Zimmerman’s Model of Self-Regulated Learning (SRL). This framework breaks learning into three critical phases:

- Forethought: Planning and goal setting.

- Performance: The actual “thinking,” such as drafting and monitoring progress.

- Self-Reflection: Evaluating the outcome, responding to feedback, and revising.

By providing visibility into these three phases, faculty can intervene before a student feels the desperate need to outsource their thinking to a chatbot. This is how we build durable learning habits that allow students to use AI productively rather than dependently.

4. Why is process visibility important in AI education?

A fascinating data point from our Inside Higher Ed Student AI Sentiment Report reveals a massive trust gap on campus. While 80% of students report they never use AI to write a full assignment, only 12% believe their peers are being equally honest.

This erosion of trust is why “gotcha” tools are so damaging. Students who spend hours on an assignment are terrified of being falsely flagged. We need tools that act as a bridge, letting students prove their effort through transparency.

Our live poll also revealed a clear signal from your peers: 49% of educators say that verifying the student’s process is a high priority, second only to the quality of the writing itself.

However, the barrier is always faculty “bandwidth.” Instructors will adopt new tools only if they save time. The goal is to provide a “show your work” view that allows faculty to assess authentic effort in seconds rather than hours.

5. How does Packback Engagement Insights verify authorship?

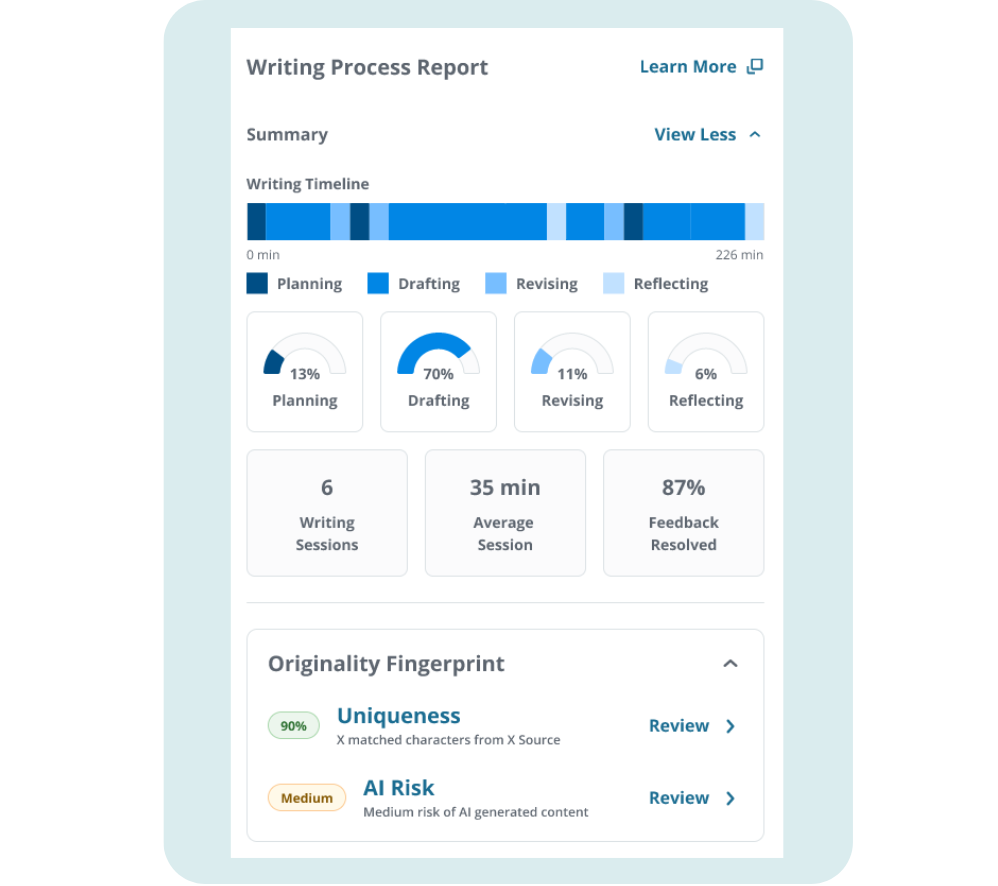

The most successful institutions are prioritizing a culture of visible authorship by leveraging Packback Engagement Insights. This feature solves the “invisible learning” problem by giving instructors a granular view of the student journey. Instead of guessing if a final paper is authentic, faculty use the Writing Process Report to see exactly how a student built their argument over time.

This shift addresses the primary concerns of four key campus stakeholder groups:

- Provosts see improved student outcomes without the governance risk of false AI accusations.

- CIOs benefit from low-friction tools built on existing LMS rails and a secure data posture.

- Faculty save time while making meaningful writing assignments work at scale.

- Students feel supported rather than surveilled, as their individual effort is finally made visible and valued.

When we use tools like the Writing Process Report, we give students the power to prove their authorship.
