How One CSU Professor Rebuilt Student Trust by Shifting from Policing AI to Teaching with It

Most faculty fear AI grading is coming for their red pen, or worse: replacing their pedagogical voice. Dr. Heidi McLaughlin was tired of playing detective. Here is how she stopped fighting AI and started redesigning her rubrics to reward the “messy middle” of student authorship.

Playing AI Detective is Exhausting

The conversation around AI grading in higher education is fraught with tension. Many instructors worry that shifting toward AI grading and feedback tools means sacrificing the human touch. But when we reposition the technology as a Coach rather than a Cop, it becomes a bridge to deeper student engagement.

For the last two years, college grading has increasingly felt less like teaching and more like forensic detective work. You find yourself staring at a submission, scrutinizing the word choice, the suspiciously perfect transitions, and that uncanny “AI-voice.” You run it through a detector, get a “70% probability” result, and then the real headache begins: the confrontation, the denials, and the erosion of the very trust your classroom is built on.

It’s a cycle that leads to faculty burnout and student resentment. Dr. Heidi McLaughlin, an Associate Professor of Developmental Psychology at California State University, Bakersfield, reached that breaking point early. She realized that as long as we continue to treat the “final PDF” as the only thing that matters, we are essentially incentivizing students to use AI to bypass the struggle of learning.

She decided to stop fighting the technology and start changing the rules of engagement.

Retiring Your Pre-2020 Rubrics

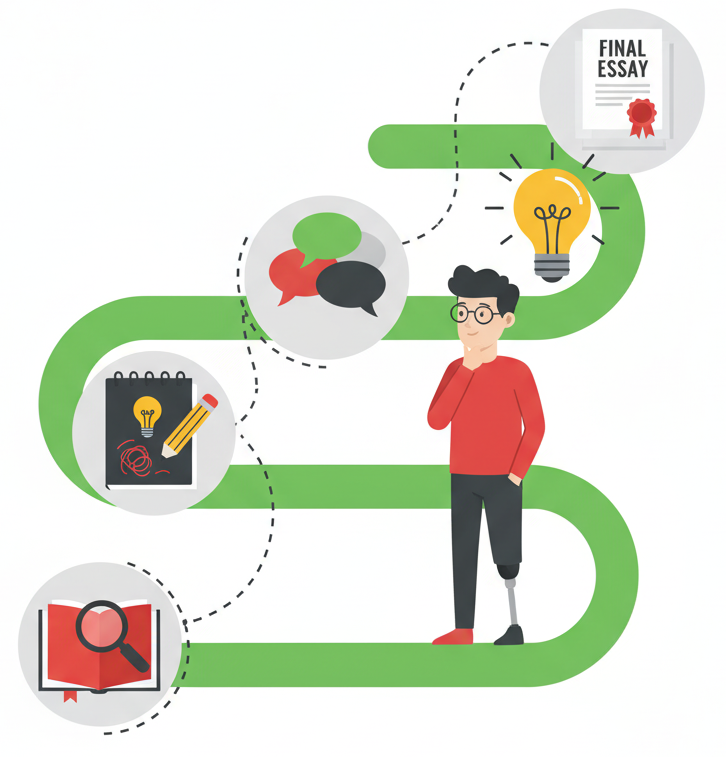

The fundamental flaw in our current assessment model is that it rewards the “view” rather than the “climb.” In a pre-generative AI world, a polished essay was a reliable signal that a student had done the hard work of research, drafting, and critical thinking. Today, that signal is broken. An AI can provide the “view” in seconds without the student ever taking a single step up the mountain.

“You can’t use a 2019 rubric for a 2026 assignment,” says Dr. McLaughlin. “We have to adjust our rubrics to actually reward the process of revision and critical thinking, rather than just grading a polished PDF.”

Dr. McLaughlin’s shift was philosophical as much as it was tactical. If the final product is no longer a proxy for learning, the process must become the product. She realized that to preserve academic integrity, she had to move the grade away from the result and toward what Packback calls the “Messy Middle”: the visible timeline of how an idea evolves from a rough thought into a reasoned argument.

How to Reward Process-Based AI Grading

When we talk about AI grading, we aren’t talking about an algorithm making a final judgment. We are talking about instrumenting the entire writing process. For a Provost, this means improved retention without governance risk. For a student, it means no longer feeling watched by a ‘surveillance’ algorithm. Instead, the student sees the AI grading tool as a coach that guides them through outlining, drafting, and revising in real time.

So, what does this look like in a senior seminar or a research lab? Dr. McLaughlin moved away from “AI-proofing” and toward “AI-integrating.” Here is the tactical breakdown of her approach:

1. The Comparative Analysis Rubric

Instead of banning GenAI tools, Dr. McLaughlin requires them. In one assignment, students must generate an AI response to a prompt and then write their own version. The grade isn’t based on which one is “better.” Instead, students are evaluated on their ability to critique the AI: Where did it hallucinate? Where was it shallow? How does their human voice add nuance that the machine missed?

2. Value the “Substance of Change”

Dr. McLaughlin’s rubrics now look for the evolution of a thought.

“I try to assess what they change over time,” she explains.

She tracks how the work changes between the AI draft and the final product, looking for thinking-process cues such as:

- Did the student delete the AI’s generic filler and fluff?

- Did they add specific examples from their own empirical research?

- Did they adjust the tone to reflect their own authorial voice?
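For instructors who want a rough, mechanical first pass at these cues, here is a minimal sketch that compares an AI draft with a student's final text using Python's standard `difflib` module. It is an illustration only, not Dr. McLaughlin's or Packback's method: the function name `revision_summary` and the four reported numbers are hypothetical choices made for this example. Word counts show how much changed, not whether the changes are good, so they can only point a human reader toward what to look at.

```python
import difflib


def revision_summary(ai_draft: str, final_text: str) -> dict:
    """Summarize how far a final submission moved from an AI draft.

    Hypothetical helper for illustration: compares the two texts word by word.
    """
    draft_words = ai_draft.split()
    final_words = final_text.split()
    # autojunk=False keeps frequent words from being ignored on long texts
    matcher = difflib.SequenceMatcher(a=draft_words, b=final_words, autojunk=False)

    kept = removed = added = 0
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "equal":
            kept += i2 - i1          # draft words that survived unchanged
        else:
            removed += i2 - i1       # draft words deleted or replaced
            added += j2 - j1         # student words inserted or substituted

    return {
        "kept": kept,
        "removed": removed,
        "added": added,
        "similarity": round(matcher.ratio(), 2),  # 1.0 means identical
    }


if __name__ == "__main__":
    draft = "Sleep is very important for memory in many ways."
    final = "Sleep is important for memory: in my lab data, recall fell after short nights."
    print(revision_summary(draft, final))
```

A high `similarity` with few `added` words suggests the AI draft was submitted nearly untouched, while a large `added` count flags passages worth reading for the student's own examples and voice.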

3. Make the Thinking Process Visible

By using tools that instrument the writing process, Dr. McLaughlin can see a student’s “Authorship Timeline.” She isn’t looking for a “100% human” score from a black-box detector. Instead, she is looking for a student who can show their work.

When a student can demonstrate that they took an AI draft, wrestled with it, revised it, and cited their sources, they are meeting the 21st-century definition of literacy.

How Process-Based Grading Rebuilds Student Trust

The most profound impact of this shift was on classroom culture. When you stop leading with suspicion, the temperature in the classroom drops. Many students are currently hiding their process out of fear of false accusations, which only creates more “metacognitive laziness.”

When Dr. McLaughlin told her students, “Yes, copy and paste the AI, and then show me how you made it better,” the anxiety vanished. Students stopped looking for ways to “beat the system” because the system was now designed to support their actual growth.

By redesigning her rubrics to celebrate the messy middle, Dr. McLaughlin didn’t just solve an academic integrity problem; she built a more engaging, trusting environment where students feel safe to fail, revise, and ultimately, think for themselves.

The Bottom Line: As a company full of former educators, we aren’t here to catch students; we are here to make their thinking visible. We protect integrity AND restore the joy of teaching when we grade the authorship process.